First some background:

I’ve become increasingly aware of the cost of ‘free’ services like Slack (Facebook and Google also fit into this category). While they do offer free, convenient services, the true cost is to your privacy and security. Having some technical knowledge means that I can have the convenience and features of their platforms without sacrificing either – it’s the best of both worlds!


My notes below only cover some of the more difficult aspects of configuring Apache, and how I circumvented Let’s Encrypt’s process of creating and accessing hidden directories. The Mattermost documentation is your friend too; their setup guides were accurate and effective. To anyone reading this: I strongly suggest setting up a test server before attempting to create a production system from scratch.

Setting up Mattermost:

As stated above, documentation is your friend. I set up the system on a test VM and was able to get it running with minimal fuss. I created a MySQL user and database for the installation on my production web server using phpMyAdmin; from there, the rest of the configuration was done from within Mattermost itself. Mattermost encourages you to use Nginx and PostgreSQL, but I used Apache and MySQL. To configure MySQL in Mattermost, no changes to SqlSettings are needed except the DataSource directive, which you will need to modify to suit the username, password and database that you set up.

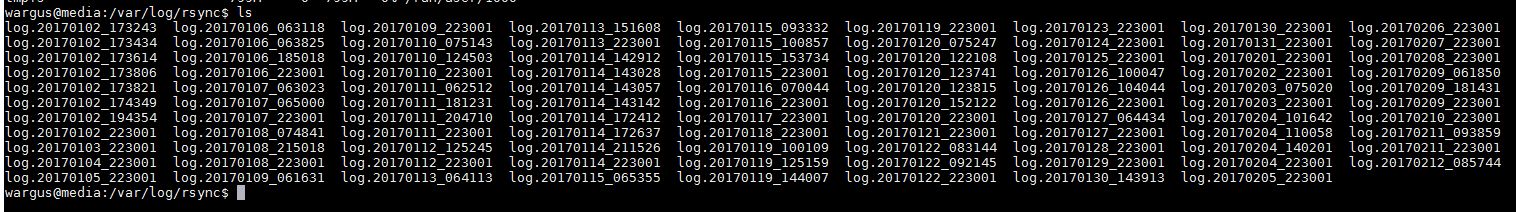
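As a rough sketch of what the DataSource value looks like for MySQL, the helper below assembles the string from its parts. The function name `mm_datasource` and the example credentials (`mmuser`, `changeme`, database `mattermost`) are placeholders of mine, not values from my setup; the general `user:password@tcp(host:port)/database` shape follows the default shipped in Mattermost's config.json, so check it against the version you install.

```shell
#!/bin/sh
# Hypothetical helper: assemble a MySQL DataSource string for Mattermost.
# Arguments: user, password, host:port, database name.
# Assumes the password contains no characters that need escaping in a DSN.
mm_datasource() {
  user=$1
  pass=$2
  host=$3
  db=$4
  printf '%s:%s@tcp(%s)/%s?charset=utf8mb4,utf8\n' "$user" "$pass" "$host" "$db"
}

# Example with placeholder credentials; paste the result into DataSource.
mm_datasource mmuser changeme 127.0.0.1:3306 mattermost
```

The printed string is what goes between the quotes of the DataSource entry in config.json.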

Once I was satisfied with the setup, I migrated the directory structure from the testbed to the production server and set up the init scripts as per the Mattermost documentation, so that it runs as a service under its own account. I will stress that if you do that, be sure to check the data directory directive so that the right locations are accessible. If you have any trouble with Mattermost, the logs are a good place to start looking for the problem. 😉

Setting up Let’s Encrypt:

This is really a two-stage process. The first stage is to set up a sub-domain using your DNS provider. I use NoIP, as I can use their client to update my dynamic IP address if and when my internet connection drops out.


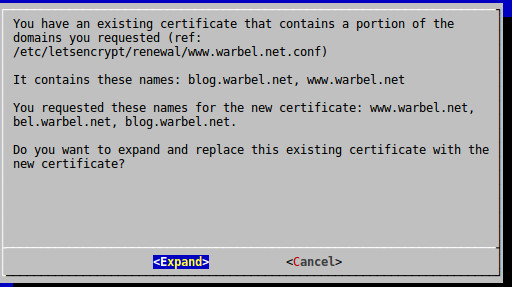

As Mattermost runs on a high port number and Apache had not yet been configured as a reverse proxy, I needed to run Let’s Encrypt in standalone mode. In this mode, letsencrypt acts as its own HTTP server in order to verify that you have control over the domains you’re trying to create SSL certificates for. The commands I ran looked like this:

sudo service apache2 stop

sudo letsencrypt certonly --standalone -d www.warbel.net -d bel.warbel.net -d blog.warbel.net -d mattermost.warbel.net

sudo service apache2 start

Let’s Encrypt recognized that I needed to add the new domain, mattermost, to my list of sites and updated the certificate accordingly.
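Because every domain on the certificate has to be listed again each time one is added, it can help to build the command from a list and look at it before running it. The sketch below only prints the command (a dry run); the function name `build_certonly_cmd` is my own invention, and it assumes the same `letsencrypt certonly --standalone` invocation used above.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the certonly command for a domain list.
build_certonly_cmd() {
  cmd="letsencrypt certonly --standalone"
  for domain in "$@"; do
    cmd="$cmd -d $domain"
  done
  printf '%s\n' "$cmd"
}

# Dry run with the domains from this post; review the output, then run it
# with sudo while Apache is stopped.
build_certonly_cmd www.warbel.net bel.warbel.net blog.warbel.net mattermost.warbel.net
```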

Configuring Apache:

Originally I had intended to set up Mattermost as a subdirectory on my primary domain, which was in keeping with my previous projects. Unfortunately that seemed impossible. In the end it was easier to set up a sub-domain and then configure Apache with a new site. I had to do some serious googling to find a semi-working config. Mattermost uses WebSockets and application program interfaces (APIs), which do not play well with reverse proxies out of the box. Furthermore, as Let’s Encrypt had already reconfigured components of Apache, I had to modify what I found to match my pre-existing sites.

I created a new site in /etc/apache2/sites-available/ called mattermost.warbel.net.conf and, working from an example configuration file I had found, created the following:

<VirtualHost mattermost.warbel.net:80>

ServerName mattermost.warbel.net

ServerAdmin xxxx@warbel.net

ErrorLog ${APACHE_LOG_DIR}/mattermost-error.log

CustomLog ${APACHE_LOG_DIR}/mattermost-access.log combined

# Enforce HTTPS:

RewriteEngine On

RewriteCond %{HTTPS} !=on

RewriteRule ^/?(.*) https://%{SERVER_NAME}/$1 [R,L]

</VirtualHost>

<IfModule mod_ssl.c>

<VirtualHost mattermost.warbel.net:443>

SSLEngine on

ServerName mattermost.warbel.net

ServerAdmin xxx@warbel.net

ErrorLog ${APACHE_LOG_DIR}/mattermost-error.log

CustomLog ${APACHE_LOG_DIR}/mattermost-access.log combined

RewriteEngine On

RewriteCond %{REQUEST_URI} ^/api/v1/websocket [NC,OR]

RewriteCond %{HTTP:UPGRADE} ^WebSocket$ [NC,OR]

RewriteCond %{HTTP:CONNECTION} ^Upgrade$ [NC]

RewriteRule .* ws://127.0.0.1:8065%{REQUEST_URI} [P,QSA,L]

RewriteCond %{DOCUMENT_ROOT}/%{REQUEST_FILENAME} !-f

RewriteRule .* http://127.0.0.1:8065%{REQUEST_URI} [P,QSA,L]

RequestHeader set X-Forwarded-Proto "https"

<Location /api/v1/websocket>

Require all granted

ProxyPassReverse ws://127.0.0.1:8065/api/v1/websocket

ProxyPassReverseCookieDomain 127.0.0.1 mattermost.warbel.net

</Location>

<Location />

Require all granted

ProxyPassReverse https://127.0.0.1:8065/

ProxyPassReverseCookieDomain 127.0.0.1 mattermost.warbel.net

</Location>

ProxyPreserveHost On

ProxyRequests Off

SSLCertificateFile /etc/letsencrypt/live/www.warbel.net/fullchain.pem

SSLCertificateKeyFile /etc/letsencrypt/live/www.warbel.net/privkey.pem

Include /etc/letsencrypt/options-ssl-apache.conf

</VirtualHost>

</IfModule>

The main difference is that the SSL virtual host needed to be contained within the SSL module block. I’ll also point out that there was a typo in the last ProxyPassReverse directive: the URL was missing the https, which stopped the site from pushing new chat messages automagically to clients connected to the server.
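To make the rewrite logic in the SSL virtual host easier to follow, here is a small shell sketch of the decision it encodes: a request goes to the ws:// backend if its path starts with /api/v1/websocket, or its Upgrade header is WebSocket, or its Connection header is Upgrade (all case-insensitive); everything else goes to the http:// backend. The function `route_backend` is purely illustrative and not part of Apache, and it ignores the extra "not a local file" condition on the second rule.

```shell
#!/bin/sh
# Illustrative only: mimic the OR-ed RewriteCond lines from the config above.
# Arguments: request URI, Upgrade header value, Connection header value.
route_backend() {
  uri=$1
  # Lowercase everything to imitate the [NC] (no-case) flag.
  lower_uri=$(printf '%s' "$uri" | tr '[:upper:]' '[:lower:]')
  upgrade=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
  connection=$(printf '%s' "$3" | tr '[:upper:]' '[:lower:]')

  case $lower_uri in
    /api/v1/websocket*)
      printf 'ws://127.0.0.1:8065%s\n' "$uri"
      return
      ;;
  esac

  if [ "$upgrade" = "websocket" ] || [ "$connection" = "upgrade" ]; then
    printf 'ws://127.0.0.1:8065%s\n' "$uri"
  else
    printf 'http://127.0.0.1:8065%s\n' "$uri"
  fi
}

route_backend /api/v1/websocket "" ""
route_backend /login "" keep-alive
```

Like the anchored pattern in the config, the sketch only treats a Connection header of exactly Upgrade as a match.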

Enable the site with:

sudo a2ensite mattermost.warbel.net

Then reload or restart Apache – you should now have a working Mattermost server.
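If the reload fails or the proxy does not behave, one thing worth checking is whether the Apache modules the config relies on are loaded. The sketch below reads the output of `apache2ctl -M` on standard input and prints any that are missing. The module list is my reading of the directives used above (proxying, WebSocket tunnelling, rewriting, RequestHeader and SSL), and the function name `missing_modules` is made up for this example.

```shell
#!/bin/sh
# Modules the config above appears to depend on (my assumption, based on the
# directives it uses).
required="proxy_module proxy_http_module proxy_wstunnel_module rewrite_module headers_module ssl_module"

# Hypothetical helper: read `apache2ctl -M` output on stdin and print each
# required module that is not listed.
missing_modules() {
  loaded=$(cat)
  for m in $required; do
    printf '%s\n' "$loaded" | grep -qw "$m" || printf '%s\n' "$m"
  done
}

# Usage on a real server:
#   apache2ctl -M | missing_modules
```

Anything it prints can be enabled with a2enmod using the name without the `_module` suffix (for example `sudo a2enmod proxy_wstunnel`), followed by a restart of Apache.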


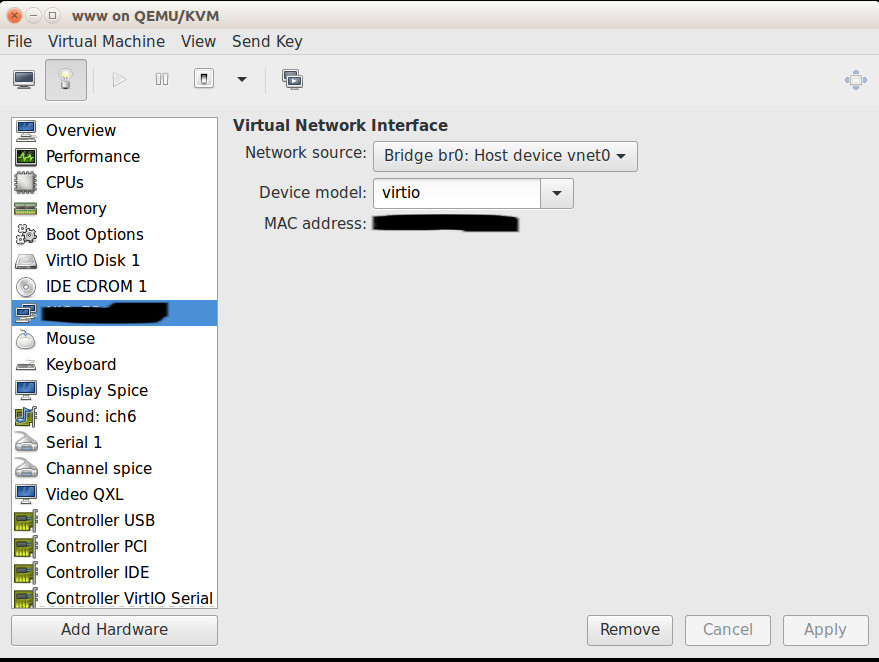

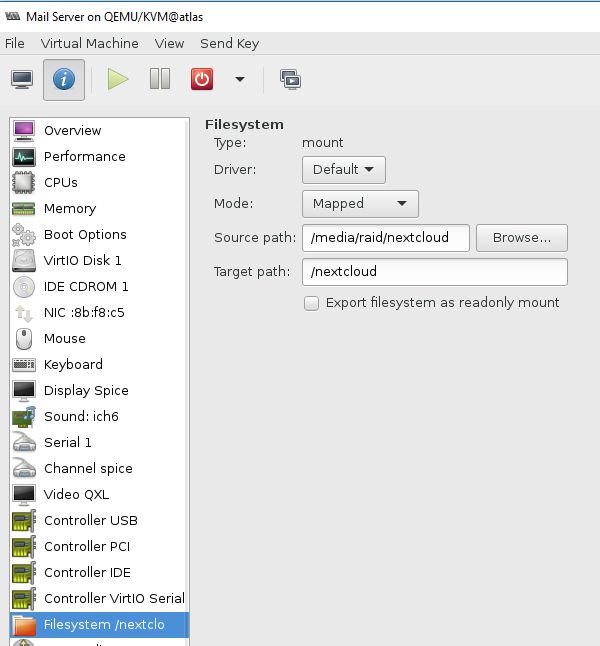

As written previously: there are performance benefits to be had by switching from VirtualBox to KVM. And now, after making the switch, I can firmly say that not only are the performance benefits noticeable, the configuration of automatic startup and, prima facie, backups seems to be much easier to establish and use.
